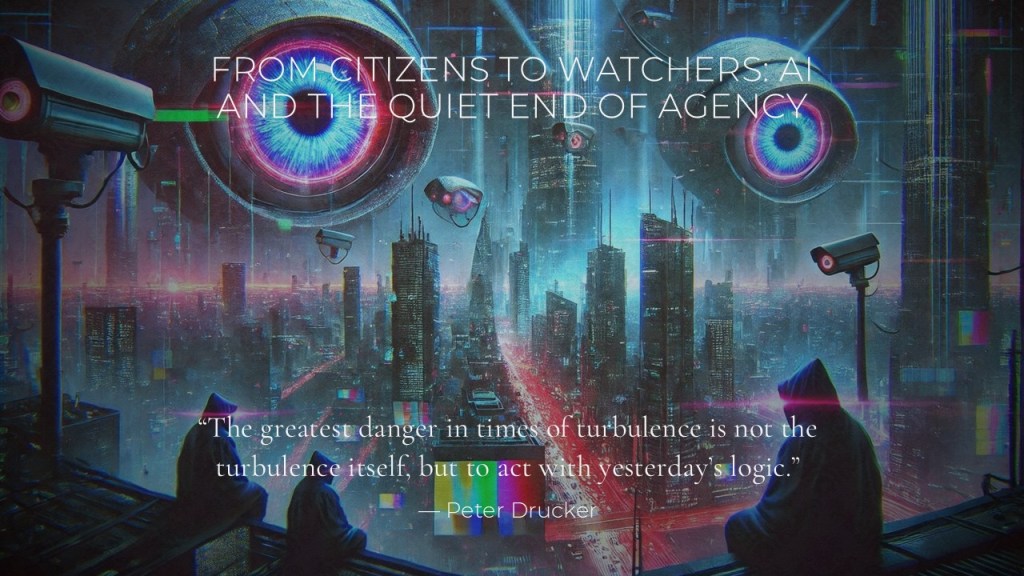

From Citizens to Watchers: AI and the Quiet End of Agency

✍️ Author’s Note

This essay grew out of a series of conversations and reflections on artificial intelligence, politics, and human agency. What concerned me was not the visible spectacle surrounding AI—deepfakes, manipulation, speed—but the quieter shift beneath it: the gradual delegation of judgment itself.

Rather than treating AI as a technical problem to be solved, I have tried to approach it as a civilizational development to be understood historically and philosophically. The question that guided this text was simple but unsettling: what happens to citizenship when thinking, deciding, and responsibility are increasingly optimized away?

This is not a warning against technology as such. It is a reflection on agency—and on the ease with which it can be surrendered.

The public debate on artificial intelligence in politics often focuses on visible dangers: deepfakes, disinformation, electoral interference. These are serious concerns. Yet they risk obscuring a deeper transformation already underway—one that does not announce itself through crisis or spectacle, but through efficiency, convenience, and delegation.

The more consequential shift is not what AI says, but what it quietly decides in advance. Political life is increasingly shaped before citizens arrive—before debate, before judgment, before choice. This is not the loud end of democracy. It is a quiet one.

Agency as an ancient political assumption

From its earliest beginnings, politics presupposed human agency. In the Athenian polis, citizenship meant participation: speech, deliberation, exposure to consequence. Freedom was inseparable from responsibility. To judge was to risk being wrong.

The Roman Republic refined this idea by embedding agency in law and office. Yet Rome also offers an early warning. As administration expanded and power centralized, citizens gradually became subjects. Politics was not abolished; it was professionalized. Governance became something done for people rather than by them. When politics turns into administration, agency does not vanish—it thins, slowly and almost imperceptibly.

Bureaucracy and the promise of rational order

Modern Europe rediscovered this tension with the rise of bureaucracy. Max Weber described bureaucracy as rational, efficient, and predictable, while warning of its tendency to trap individuals in an “iron cage” of procedures. Still, Weber assumed something essential: responsibility remained human. Officials decided. Politicians judged. Accountability could be traced.

Artificial intelligence breaks with this tradition. It does not merely apply rules; it predicts, learns, and optimizes. What bureaucracy achieved through hierarchy, AI achieves through probability. The iron cage no longer needs walls. It operates through dashboards, metrics, and recommendations.

The Enlightenment gamble

The Enlightenment placed its faith in reason—but in human reason. Kant’s call to “dare to know” assumed autonomy, moral struggle, and the inevitability of error. Humanism accepted fallibility as the price of freedom.

AI introduces a rival logic: error minimization, behavioral prediction, continuous optimization. This is not enlightenment reason but instrumental rationality without moral burden. The danger is not tyranny, but infantilization—citizens relieved of judgment in exchange for efficiency and comfort.

A Brief Philosophical Detour

Thinking, Identity, and the Loss of Sovereignty

For centuries, Western self-understanding rested on a powerful assumption: that to think is to be human. When René Descartes wrote cogito, ergo sum, he anchored identity, agency, and moral responsibility in the act of thinking itself.

Artificial intelligence unsettles this foundation. Increasingly, AI systems perform cognitive tasks resembling human thought: pattern recognition, abstraction, inference, self-correction. The difference is not qualitative but quantitative—vast memory, immense speed, near-perfect recall. If thinking is reduced to information processing alone, the distinction begins to blur.

Here Yuval Noah Harari makes a decisive observation. If humans continue to define themselves primarily by their ability to think in words, their identity will collapse. The deeper threat of AI is not intelligence as such, but the erosion of the sovereign space of human thinking—the fragile domain where doubt, hesitation, contradiction, and moral struggle reside.

This sovereignty matters because political agency depends on it. Judgment is not the production of answers; it is the capacity to pause, to weigh competing values, to accept uncertainty and responsibility. AI, by contrast, is designed to minimize hesitation. It optimizes, predicts, and recommends. Thinking shifts from authorship to selection among pre-structured options.

It is often said that AI lacks emotion. Yet this reassurance weakens by the day. AI already writes poetry, articulates grief, simulates empathy, and explains emotions with unsettling fluency. Whether these emotions are “real” or learned representations may remain philosophically interesting; politically it is secondary. What matters is that they function.

When thinking is detached from responsibility, it becomes a technical capacity rather than a moral one.

At that point, the loss is no longer merely political. It is existential. If thinking no longer anchors identity, citizenship itself becomes performative rather than substantive. One may still participate, still vote, still speak—but increasingly as a watcher of outcomes shaped elsewhere.

The danger is not that AI will think like humans.

It is that humans may gradually cease to think as sovereign agents at all.

Soft domination and democratic ritual

Long before the digital age, Alexis de Tocqueville warned of a new form of domination: gentle, administrative, and paternalistic—a power that does not break wills but softens them. AI fulfils this warning with remarkable precision. It does not command; it suggests. It does not forbid; it nudges. Freedom remains formally intact, yet choices are increasingly pre-shaped.

Democracy does not end when elections stop. It ends when decisive moments migrate upstream—beyond public reasoning and shared deliberation. Elections persist, debates continue, parliaments speak. Politics risks becoming commentary on outcomes already produced by systems no one fully governs.

Beyond the Industrial Revolution

The Industrial Revolution displaced labour; it did not displace judgment. Machines replaced muscle, not responsibility. AI accelerates something deeper—the outsourcing of decision-making itself. Once judgment is delegated, societies rarely notice the loss until responsibility has already evaporated.

History suggests that skills can be relearned, institutions reformed, even economies rebuilt. Habits of responsibility, once surrendered, are far harder to reclaim.

Conclusion

The question is not whether AI influences politics. It already does. The question is whether citizens are willing to remain agents rather than watchers—participants in a shared political fate rather than supervised observers of optimized outcomes.

Humanism begins where one refuses to delegate responsibility. Civilizations do not lose agency because it is taken from them, but because, step by step, they give it away.

“The price of freedom is eternal vigilance.”

Attributed to Thomas Jefferson

William J J Houtzager, aka WJJH, February 2026

📌 Blog Excerpt

Artificial intelligence is transforming politics—but not in the way most debates suggest. Beyond disinformation and speed lies a deeper shift: the quiet erosion of human agency.

From Citizens to Watchers traces how AI challenges judgment, responsibility, and democratic participation, placing today’s developments within a longer historical and philosophical perspective.

There are several crucial points in your posting about AI and the very real dangers. One, the loss of human agency if we place all responsibility on a machine that somehow automates or imitates thinking… The other point you make about AI, which was already emerging with Google search: “The danger is not tyranny, but infantilization—citizens relieved of judgment in exchange for efficiency and comfort.”

We are seeing this all over the place: interactions are reduced to memes, magical thinking is replacing the hard think… Too many of my friends just wave all concerns away, as if there was nothing one could do…

The laziness that comes from outsourcing physical labor to machines, desirable as that is because we have a mass society that needs mass solutions, is now overtaking our thinking, and with it our connection to reality. We live online; outside we are zombie-like… It is a level of alienation that is excruciating. And it will end, I believe, badly.

(Now I get back to work…)


Dear Marton, thank you for your comment; you capture well the unease beneath the surface. What worries me is not so much tyranny as the quiet relief from responsibility. Resignation leads to no decisions at all. In a way, resignation is the perfect environment for the transformation from citizens to watchers. History shows that people rarely lose their agency because it is taken from them. More often, they surrender it willingly, because delegation feels efficient, comfortable, and modern. The danger is not that we become zombies overnight, but that we slowly grow accustomed to watching rather than deciding. And once that habit settles in, it is difficult to reverse. Perhaps there is a deeper point here: the danger is not technology itself, but the moment when people quietly decide that their judgment no longer matters.

W
